Everything You Need to Know About How Smart Glasses Work

Posted on July 27, 2023

Wearable technology has evolved to the point where devices once confined to science fiction are now real products. Smart glasses are among the most ambitious of them, changing how we interact with technology and the world around us. But what makes these high-tech spectacles tick? This blog post explores the science and technology behind smart glasses.

Part 1. The Basics of Smart Glasses

Diving into the world of smart glasses can seem daunting due to the complex technology involved. However, understanding the basics can make this high-tech world much more accessible. In this section, we’ll delve into what smart glasses are, how they work, and the components that allow for their impressive functionality.

1.1. What are Smart Glasses?

Smart glasses are wearable computer glasses that add information to what the wearer sees. They represent a step forward in wearable technology, as they seamlessly integrate the digital and physical world through the use of augmented reality (AR).

Unlike traditional glasses, which only correct vision, smart glasses are packed with components such as cameras, sensors, and a miniature display. They’re designed to overlay digital content (notifications, maps, and more) onto the user’s view of the real world, providing an interactive, immersive experience.

1.2. How do Smart Glasses work?

At a basic level, smart glasses work by projecting digital images onto the user’s field of vision. This can be achieved through various techniques, such as embedding miniature displays in the lenses or using a system of mirrors and prisms to reflect an image directly into the user’s eyes.

But the magic of smart glasses doesn’t stop there. These devices are typically packed with sensors like accelerometers, gyroscopes, and compasses. Together, these sensors track the wearer’s head movements so that the digital overlays can be adjusted in real time, keeping the AR experience consistent and immersive.
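One common way to combine such sensors is a complementary filter, which blends the fast but drifting gyroscope signal with the slow but stable gravity direction from the accelerometer. The sketch below is only an illustration: the axis convention, the `alpha` weight, the sample rate, and the simulated gyroscope bias are all assumptions, not values from any particular product.

```python
import math


def accel_pitch_deg(ax: float, ay: float, az: float) -> float:
    """Pitch in degrees from the direction of gravity (assumed axis convention)."""
    return math.degrees(math.atan2(-ax, math.hypot(ay, az)))


def complementary_filter(prev_pitch_deg: float, gyro_rate_dps: float,
                         accel_pitch: float, dt_s: float,
                         alpha: float = 0.98) -> float:
    """Blend the integrated gyroscope rate with the accelerometer's pitch estimate."""
    gyro_pitch = prev_pitch_deg + gyro_rate_dps * dt_s  # responsive, but drifts
    return alpha * gyro_pitch + (1.0 - alpha) * accel_pitch  # accelerometer corrects drift


def simulate_still_head(true_pitch_deg: float = 10.0, gyro_bias_dps: float = 0.5,
                        steps: int = 1000, dt_s: float = 0.01) -> float:
    """Head held still: the gyro reports only its bias, the accelerometer sees gravity."""
    ax = -math.sin(math.radians(true_pitch_deg))
    az = math.cos(math.radians(true_pitch_deg))
    pitch = 0.0  # deliberately wrong starting estimate
    for _ in range(steps):
        measured = accel_pitch_deg(ax, 0.0, az)
        pitch = complementary_filter(pitch, gyro_bias_dps, measured, dt_s)
    return pitch


if __name__ == "__main__":
    print(f"estimated pitch: {simulate_still_head():.2f} degrees")
```

With these illustrative numbers the estimate settles within a fraction of a degree of the true 10-degree pitch, even though the simulated gyroscope is biased and the filter starts from the wrong angle.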

Smart glasses also feature input methods, such as voice, gesture recognition, and touch controls, allowing the user to interact with the digital content. They might also include features like eye-tracking or even brain-computer interfaces for a truly futuristic interaction experience.

1.3. Key Components of Smart Glasses

To accomplish their tasks, smart glasses are equipped with several key components:

- Display: This is where the digital content is presented to the user. There are several types of display technologies available, each with its strengths and weaknesses, which we will explore in detail later.

- Processor: The processor is essentially the brain of the smart glasses, responsible for running applications and processing data from the sensors.

- Sensors: These detect the user’s physical interaction with the device and the surrounding environment. They include components like cameras, microphones, and motion sensors.

- Battery: Powering the whole operation, the battery needs to provide enough juice to keep the glasses running while being lightweight and compact.

- Connectivity: Most smart glasses include Wi-Fi, Bluetooth, and sometimes cellular connectivity. This allows the glasses to connect to the internet or a smartphone, making a broad range of applications possible.

To sum it up, smart glasses blend advanced technology and practical design to create an augmented reality experience that brings together the digital and physical world. Despite the complex components hidden within, their goal is simple: to provide a seamless, intuitive, and interactive digital experience right before your eyes.

Part 2. Display Technology – The Magic Behind AR

One of the main components driving the immersive experience of smart glasses is the display technology. This innovative tech enables the integration of digital content into the user’s natural field of view, effectively creating an augmented reality. Let’s break down the various display technologies that make this possible.

2.1. LCOS (Liquid Crystal on Silicon)

Liquid Crystal on Silicon, or LCOS, is a reflective microdisplay technology commonly used in projection systems. It uses a layer of liquid crystals applied on top of a mirror-coated silicon chip. When a voltage is applied across a pixel, the liquid crystals reorient and change the polarization of the light reflected from the mirror beneath them, which determines how much of that light reaches the viewer. By controlling the intensity of red, green, and blue light at each pixel, the panel creates a full-color image.

In smart glasses, LCOS microdisplays are tiny, typically less than an inch across the diagonal. They are paired with LEDs or laser diodes as light sources, and the resulting image is projected onto the lens of the glasses or another optical element to be viewed by the user.

2.2. OLED (Organic Light-Emitting Diodes)

OLED display technology uses organic compounds that emit light when an electric current is applied. The primary advantage of OLEDs over other display technologies is that they do not require a backlight. Because each pixel emits its own light and can be switched off completely, OLED displays can show deep blacks and can be thinner and lighter than backlit displays.

For smart glasses, OLEDs offer bright, full-color displays with fast response times, excellent viewing angles, and low power consumption. The individual organic cells allow for flexible display shapes, adding to the aesthetic and design possibilities for smart glasses.

2.3. DLP (Digital Light Processing)

Digital Light Processing, or DLP, is a display technology based on micro-electro-mechanical systems (MEMS) that uses a digital micromirror device. It was originally developed by Texas Instruments (TI).

In smart glasses, DLP can produce bright, sharp, and colorful images efficiently. A DLP system has three main components: the DMD chip (Digital Micromirror Device), the color wheel, and the light source. The DMD chip is made up of tiny swiveling mirrors, one per pixel, that tilt to reflect light either towards or away from the projection optics, depending on whether that pixel should be on or off.
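Since each mirror is either on or off, shades between black and full brightness come from how long the mirror stays on within a frame. A common way to do this is binary-weighted pulse-width modulation, sketched below. The 8-bit depth, the 1000-microsecond time slot, and the function names are illustrative assumptions, and real devices reorder and split these time slices in more elaborate ways.

```python
def bit_plane_schedule(level: int, frame_us: float = 1000.0, bits: int = 8):
    """Return (mirror_on, duration_us) pairs, most significant bit first.

    Each bit of the gray level gets a time slice proportional to its weight,
    so the total on-time is proportional to the level.
    """
    if not 0 <= level < 2 ** bits:
        raise ValueError("level out of range for the given bit depth")
    unit_us = frame_us / (2 ** bits - 1)  # duration of the least significant bit
    return [(bool((level >> b) & 1), unit_us * 2 ** b)
            for b in reversed(range(bits))]


def perceived_brightness(schedule) -> float:
    """Fraction of the frame during which the mirror reflects light to the eye."""
    total_us = sum(duration for _, duration in schedule)
    on_us = sum(duration for is_on, duration in schedule if is_on)
    return on_us / total_us


if __name__ == "__main__":
    for level in (0, 64, 128, 255):
        fraction = perceived_brightness(bit_plane_schedule(level))
        print(f"gray level {level:3d} -> mirror on {fraction:.1%} of the time slot")
```

The eye integrates these rapid flashes, so a mirror that is on for half of the slot is perceived as roughly half brightness. Color is typically built up the same way, one primary at a time.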

2.4. Direct Projection, Light Guide Optics, and Retinal Projection

Depending on the model, smart glasses may use one of several methods to deliver the generated image to the wearer’s eyes.

Direct Projection: Here, the image is projected directly onto the lens of the glasses. This method is relatively straightforward, but the challenge lies in ensuring that the display is clear and visible under varying lighting conditions.

Light Guide Optics: This involves a more complex system, where an image is projected from a hidden source within the frame and then guided into the wearer’s eyes via internal reflection within the lens. This method allows for more discreet and fashionable designs, as the projection source is not directly visible.

Retinal Projection: This innovative method involves creating images by beaming light directly onto the retina. The resulting visual perception is that of a high-resolution display floating in space. While this might sound futuristic, companies like Magic Leap and Avegant are already exploring this technology.
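For the light guide approach, the internal reflection that keeps the image inside the lens follows from Snell’s law: light stays trapped only when it strikes the surface at more than the critical angle, arcsin(n_outside / n_guide). The sketch below works through that calculation. The refractive index of 1.5 is a typical textbook figure for glass, used here as an assumption rather than the specification of any real waveguide.

```python
import math


def critical_angle_deg(n_guide: float, n_outside: float = 1.0) -> float:
    """Smallest angle of incidence (from the surface normal) that is totally reflected."""
    if n_guide <= n_outside:
        raise ValueError("total internal reflection needs n_guide > n_outside")
    return math.degrees(math.asin(n_outside / n_guide))


def stays_in_guide(incidence_deg: float, n_guide: float, n_outside: float = 1.0) -> bool:
    """True if a ray hitting the lens surface at this angle remains inside the guide."""
    return incidence_deg > critical_angle_deg(n_guide, n_outside)


if __name__ == "__main__":
    n_glass = 1.5  # assumed refractive index
    print(f"critical angle: {critical_angle_deg(n_glass):.1f} degrees")
    for angle in (30.0, 45.0, 60.0):
        state = "guided" if stays_in_guide(angle, n_glass) else "leaks out"
        print(f"ray at {angle:.0f} degrees: {state}")
```

For an index of 1.5 the critical angle is about 41.8 degrees, so rays bouncing at 45 or 60 degrees stay inside the lens while a 30-degree ray escapes. A higher-index material lowers the critical angle and lets the guide carry a wider range of ray angles.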

These diverse display technologies and their applications within smart glasses serve a common purpose: to seamlessly merge digital data into the wearer’s physical world, creating an intuitive, interactive, and immersive experience that is set to redefine our interaction with technology and the world around us.

Part 3. Input Methods – Commanding Your Digital Reality

Input methods play a critical role in shaping the user experience with smart glasses. They determine how we interact with our digital reality. Given their importance, let’s take an in-depth look at the different methods currently employed, as well as some promising ones on the horizon.

3.1. Touch Controls

The most direct method of interacting with smart glasses is through touch controls. Smart glasses often feature a touch-sensitive panel built into the side of the frame, turning the arms of the glasses into a sort of touchpad.

By using various tapping, swiping, or pinching motions, users can navigate menus, select options, or control the volume. While simple and intuitive, touch controls also come with the challenge of avoiding accidental inputs. However, advances in haptic feedback and touch rejection technology are helping to address this issue.
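To make the accidental-input problem concrete, here is a minimal sketch of how a touch strip on the frame might separate taps and swipes from contacts that should be ignored. The `Touch` record, the threshold values, and the labels are all hypothetical; a real device would tune them and would usually look at pressure and contact area as well.

```python
from dataclasses import dataclass


@dataclass
class Touch:
    start_mm: float    # position along the arm where the finger landed
    end_mm: float      # position where the finger lifted
    duration_s: float  # how long the finger stayed on the strip


def classify(touch: Touch, min_tap_s: float = 0.03, max_tap_s: float = 0.3,
             swipe_mm: float = 8.0) -> str:
    """Label a contact as a tap, a swipe, or an accidental touch to ignore."""
    travel = touch.end_mm - touch.start_mm
    if touch.duration_s < min_tap_s:
        return "ignored"  # too brief: likely hair or a sleeve brushing past
    if abs(travel) >= swipe_mm:
        return "swipe_forward" if travel > 0 else "swipe_back"
    if touch.duration_s <= max_tap_s:
        return "tap"
    return "ignored"  # long, stationary contact: likely adjusting the glasses


if __name__ == "__main__":
    samples = [Touch(10, 30, 0.2), Touch(10, 10.5, 0.1), Touch(10, 11, 2.0)]
    for sample in samples:
        print(sample, "->", classify(sample))
```

The design choice here is to reject by default: anything that does not clearly look like a deliberate tap or swipe is dropped, which trades a few missed inputs for fewer phantom ones.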

3.2. Gesture Recognition

Gesture recognition allows users to interact with their smart glasses using physical movements. This is made possible by embedded cameras or sensors that track these movements and translate them into commands.

Common gestures might include moving your hand up or down to scroll, pinching your fingers to zoom, or even making a specific shape with your hands to activate a certain feature. While this method offers a new level of interaction, it also presents challenges, such as distinguishing intentional gestures from normal movement and avoiding gesture fatigue.

3.3. Voice Commands

Voice commands provide a hands-free way to interact with smart glasses. Integrated microphones pick up spoken commands and translate them into actions through the power of speech recognition technology.

For instance, you might say, “Show me my messages,” to read your texts or, “Directions to the nearest coffee shop,” to pull up a navigation interface. The effectiveness of this input method largely depends on the accuracy of the speech recognition software. Fortunately, with companies like Google and Amazon continually improving their speech recognition technologies, this method is becoming increasingly efficient.
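Once the speech recognizer has produced a text transcript, the glasses still have to decide what the user meant. The sketch below shows one very simple way to do that with pattern matching on the two example phrases above. The intent names and patterns are hypothetical, and production assistants rely on trained language-understanding models rather than hand-written rules like these.

```python
import re

# Hypothetical intents keyed by name; each pattern runs on the lowercased transcript.
INTENTS = {
    "show_messages": re.compile(r"\b(?:show|read)\b.*\bmessages?\b"),
    "navigate": re.compile(r"\bdirections to (?P<destination>.+)"),
}


def parse_command(transcript: str):
    """Return (intent_name, slots) for a transcript, or (None, {}) if nothing matches."""
    text = transcript.lower().strip().rstrip(".!?")
    for name, pattern in INTENTS.items():
        match = pattern.search(text)
        if match:
            return name, match.groupdict()
    return None, {}


if __name__ == "__main__":
    for phrase in ("Show me my messages",
                   "Directions to the nearest coffee shop",
                   "What a lovely day"):
        print(repr(phrase), "->", parse_command(phrase))
```

Returning `None` for unmatched phrases matters in practice: glasses hear a lot of speech that is not addressed to them, and acting on a poor match is worse than doing nothing.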

3.4. Brain-Computer Interfaces

Perhaps the most futuristic input method being explored is the brain-computer interface (BCI). This technology involves reading brain activity and translating it into commands. While still in the experimental phase, companies like Facebook (Meta) and Neuralink are investing in research to make this a reality.

If successful, BCI would revolutionize how we interact with not just smart glasses, but technology in general. It would allow for truly hands-free, voice-free, and even movement-free control over our devices, making technology an even more seamless extension of our thoughts and intentions.

3.5. Eye-Tracking

Eye-tracking technology takes input methods to a whole new level by allowing the device to understand where the user is looking. This is achieved through infrared sensors that monitor eye movement and pupil dilation.

Eye-tracking can be used for more intuitive controls, such as scrolling or zooming based on where you’re looking, or even selecting options by blinking. While this technology is still relatively new in smart glasses, it promises a future where our eyes not only receive information but also play a key role in controlling our digital experience.

In conclusion, the input methods available for smart glasses range from the familiar, like touch controls, gesture recognition, and voice commands, to the more futuristic eye-tracking and brain-computer interfaces. Together, they create a highly interactive user experience, making smart glasses more than just a passive display device, but a dynamic interface between us and the digital world.

Part 4. Processor, Power, and Connectivity – The Essential Trio

To understand the full technological prowess of smart glasses, it’s crucial to delve into three essential aspects: the processor that drives the operation, the power system that keeps the device running, and the connectivity options that tether the smart glasses to the digital world. Here’s a detailed breakdown of these key components.

4.1. Processor – The Brain of the Operation

The processor in smart glasses is essentially the brain of the device. In practice it is usually a system-on-chip (SoC) that combines a CPU (Central Processing Unit) with graphics and other specialized cores. It executes commands, runs applications, and processes data. The processor has to be compact enough to fit within the glasses’ frame, yet powerful enough to handle complex tasks like rendering augmented reality (AR) graphics and running AI algorithms.

Two main considerations dictate the choice of processors for smart glasses: power efficiency and processing power. Power efficiency is critical due to the limited battery capacity. The processor needs to deliver high performance while consuming minimal power. On the other hand, processing power is crucial for delivering a smooth AR experience. This requires the quick processing of graphical data, sensor input, and other tasks.

Major tech companies have invested heavily in processors for head-worn devices. For instance, Apple’s recently announced Apple Vision Pro, which is a mixed reality headset rather than a pair of glasses, pairs the company’s M2 chip with a dedicated R1 chip. According to Apple, the M2 delivers the performance required for spatial computing, while the R1 processes input from the headset’s cameras, sensors, and microphones to support precise tracking and real-time 3D mapping. At the lighter end of the spectrum, Facebook (now Meta) has partnered with Ray-Ban to produce smart glasses that use Snapdragon processors from Qualcomm.

4.2. Power – Keeping the Lights On

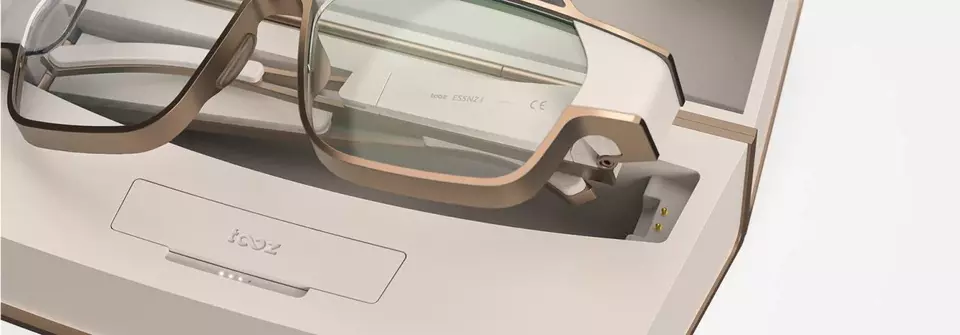

Smart glasses require efficient power management to ensure they last throughout the day. Given their compact form factor, fitting a sizeable battery into the frame is a significant challenge.

The common solution is to use lithium-polymer or lithium-ion batteries due to their high energy density and flexibility in shape. However, power management doesn’t stop at the battery choice. The device’s software also plays a role in managing power consumption by optimizing processes and ensuring the device goes into low-power mode when not in use.
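A rough back-of-the-envelope calculation shows why low-power mode matters so much. The sketch below estimates battery life from the stored energy and the average power draw over a duty cycle. Every number in it (160 mAh, 3.7 V, 800 mW active, 20 mW idle) is a made-up illustrative figure, not a measurement of any real product.

```python
def average_power_mw(active_mw: float, idle_mw: float, active_fraction: float) -> float:
    """Average draw when the device is active for a given fraction of the time."""
    if not 0.0 <= active_fraction <= 1.0:
        raise ValueError("active_fraction must be between 0 and 1")
    return active_fraction * active_mw + (1.0 - active_fraction) * idle_mw


def battery_life_hours(capacity_mah: float, voltage_v: float, avg_power_mw: float) -> float:
    """Runtime = stored energy (mAh x V = mWh) divided by average power (mW)."""
    return capacity_mah * voltage_v / avg_power_mw


if __name__ == "__main__":
    capacity_mah, voltage_v = 160.0, 3.7  # assumed small lithium-polymer cell
    for fraction in (1.0, 0.5, 0.2):
        power = average_power_mw(active_mw=800.0, idle_mw=20.0, active_fraction=fraction)
        hours = battery_life_hours(capacity_mah, voltage_v, power)
        print(f"active {fraction:.0%} of the time: {power:.0f} mW average, {hours:.1f} h")
```

Under these assumptions, running flat out drains the cell in well under an hour, while being active only 20% of the time stretches it to a little over three hours. That gap is why software that aggressively drops into low-power mode matters as much as the battery itself.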

Advances in charging technology have also made it easier to recharge smart glasses. Many models come with a proprietary case that doubles as a charger, so your glasses are topped up every time you store them away.

4.3. Connectivity – Linking to the Digital World

Connectivity is vital for smart glasses, as they rely on data transfer to function effectively. Most smart glasses connect in one of two ways: by tethering to a smartphone or by connecting directly to a network.

Tethering to a Smartphone: Most current smart glasses models use Bluetooth to connect to a user’s smartphone. The smartphone serves as the primary connection to the internet, and many of the glasses’ features rely on the phone’s computing power. Some models also support Wi-Fi for a more stable connection when available.

Direct Network Connectivity: More advanced smart glasses models feature built-in Wi-Fi, GPS, and even cellular connectivity. These standalone devices can function independently of a smartphone, offering more flexibility to the user.

Connectivity also involves ensuring compatibility with different operating systems. Most smart glasses are designed to work with popular mobile operating systems like iOS and Android, allowing users to seamlessly sync their glasses with their existing devices.

In conclusion, the processor, power, and connectivity capabilities of smart glasses play a significant role in their functionality and user experience. As the technology evolves, we can expect improvements in these areas, leading to more powerful, longer-lasting, and more connected devices.

Part 5. Software – Bringing it All Together

While the physical components of smart glasses create the foundation, it’s the software that truly brings the device to life. It transforms the raw computational power into an immersive AR experience, dictates user interaction, and often determines the success or failure of the device. Let’s examine the key aspects of smart glasses software in detail.

5.1. Operating System – The Backbone of the Experience

The operating system (OS) is the software that manages the device’s hardware and software resources, providing services for other software applications. For smart glasses, the OS must be lightweight to conserve resources, while also robust enough to support AR experiences.

There are several key players in this space. Google Glass, for example, runs on a stripped-down version of Android, and manufacturers such as Vuzix and Epson also build their smart glasses software on Android. Microsoft’s HoloLens runs Windows Holographic, a variant of Windows. Apple has now joined this list with the announcement of its mixed reality headset, the Apple Vision Pro.

Apple’s Vision Pro is powered by visionOS, which the company describes as its first spatial operating system. It builds on the foundations of macOS, iOS, and iPadOS and is designed specifically for the Vision Pro. With visionOS, users control the headset with eye movements, hand gestures, and voice commands rather than a handheld controller.

The introduction of visionOS illustrates Apple’s commitment to this space and its ambition to change the way we interact with digital content.

The OS is also responsible for the graphical user interface (GUI) which determines the look and feel of the digital information overlaid on the user’s view. It has to ensure the AR content is seamlessly integrated into the real world in a way that’s intuitive and non-intrusive.

5.2. Applications – Catering to Different Needs

The practical utility of smart glasses largely depends on the variety and quality of applications they support. The most common categories of apps for smart glasses are messaging, navigation, fitness, entertainment, and work-related tools.

Messaging apps can display texts, emails, and other notifications in your field of view. Navigation apps can provide turn-by-turn directions, points of interest, and live traffic updates. Fitness apps can display workout statistics in real-time, while work-related apps can assist with tasks like remote assistance, logistics, and hands-free documentation. The possibilities are vast and continue to expand as more developers get involved in creating applications for smart glasses.

5.3. Artificial Intelligence – The Smart in Smart Glasses

AI plays a crucial role in smart glasses software. AI algorithms help interpret voice commands, recognize gestures, track eye movement, and even learn from user behavior to provide a more personalized experience.

AI also enables advanced features like object recognition, facial recognition, and contextual awareness. This allows the glasses to identify items in the user’s view, recognize people, and understand the user’s current situation to provide relevant information.

5.4. Security and Privacy – The Invisible Shield

One of the critical concerns with smart glasses is ensuring user security and privacy. The software must protect against potential threats like hacking, data theft, and unauthorized surveillance. Some measures include data encryption, secure booting, authentication requirements, and regular software updates to patch vulnerabilities.
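As a small illustration of the authentication idea, the sketch below checks that a software update has not been tampered with before it is accepted. It uses a shared-secret HMAC from Python’s standard library purely as a stand-in. Real secure boot and update systems generally rely on asymmetric signatures with keys protected in hardware, and the key, image bytes, and function names here are hypothetical.

```python
import hashlib
import hmac


def sign_image(image: bytes, key: bytes) -> bytes:
    """Compute an authentication tag over the update image (HMAC-SHA256)."""
    return hmac.new(key, image, hashlib.sha256).digest()


def verify_image(image: bytes, tag: bytes, key: bytes) -> bool:
    """Accept the image only if its tag matches, using a constant-time comparison."""
    return hmac.compare_digest(sign_image(image, key), tag)


if __name__ == "__main__":
    key = b"demo-key-not-for-production"   # hypothetical secret
    image = b"firmware v1.2 contents"      # hypothetical update payload
    tag = sign_image(image, key)

    print("untouched image accepted:", verify_image(image, tag, key))
    print("tampered image accepted: ", verify_image(image + b"!", tag, key))
```

The comparison uses `hmac.compare_digest` rather than `==` so that the time taken does not leak how many leading bytes of a forged tag were correct.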

Privacy concerns are especially relevant given the data that smart glasses can collect, such as location history, visual data, and even biometrics in some cases. Manufacturers must strike a balance between functionality and user privacy, ensuring they comply with data protection regulations and respect user privacy.

In conclusion, the software of smart glasses not only provides the interface for user interaction but also brings together the various hardware components to create a cohesive AR experience. As this field continues to evolve, we can expect software advancements that bring new features, improve user experience, and address existing challenges.

Conclusion: The Future of Smart Glasses

As we demystify the technology behind smart glasses, it’s exciting to ponder the future possibilities. We are witnessing just the nascent stages of what this technology can offer. As advancements in hardware and software continue, smart glasses are poised to deliver more immersive AR experiences, longer battery life, improved comfort, and an expanded spectrum of applications, some of which we haven’t even conceived yet.

Smart glasses embody a beautiful orchestration of advanced display technology, innovative input methods, powerful processors, efficient power management, and cutting-edge software. These seemingly simple eyewear devices are, in fact, a profound embodiment of technological progress. As we keep pushing the boundaries of what’s possible, the future of smart glasses holds incredible promise to redefine our interaction with technology and the world.